What is Framerate?

Framerate is the frequency at which images appear on a screen or display; displays work by showing a series of frames (basically ‘still’ images) in rapid succession, causing our visual system to see motion. The number of frames a game produces is expressed in FPS (Frames Per Second), while the rate at which a display can show them (its refresh rate) is expressed in Hz (Hertz); e.g. a 60Hz monitor can display up to 60 frames per second.

Framerate is important in gaming for a number of reasons, most importantly for fluidity. The higher the framerate, the smoother the image on the screen becomes, making it easier and more natural to track fast-moving objects.

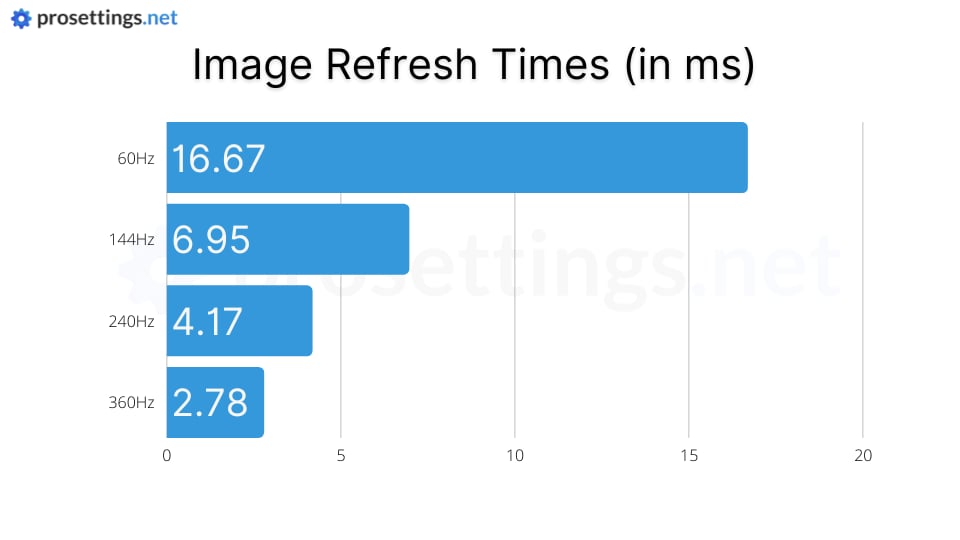

The above image shows why higher refresh rate monitors have become the standard in the competitive gaming scene. A regular 60Hz display (by far the most common refresh rate for displays these days) can refresh the image 60 times per second at most, so even if your GPU is capable of rendering far more FPS you’ll still only see 60 images per second. A 144Hz monitor more than doubles the number of images displayed per second, resulting in a much clearer and more fluid image, provided your hardware can push enough frames. Having hardware that can do so is an important consideration when buying a high refresh rate display.
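As a rough illustration of that cap, the sketch below treats the number of frames you actually see as the lower of what the GPU renders and what the display can refresh. The function name `displayed_fps` and the sample numbers are made up for this example, and it ignores effects such as tearing or variable refresh rate.

```python
def displayed_fps(rendered_fps: float, refresh_hz: float) -> float:
    """Rough upper bound on the distinct frames shown per second.

    Simplified model (an assumption for illustration): the display can
    never show more frames than its refresh rate, and it cannot show
    frames the GPU did not render.
    """
    return min(rendered_fps, refresh_hz)


# Hypothetical examples: (frames rendered by the PC, monitor refresh rate)
for rendered, refresh in [(200, 60), (200, 144), (100, 144)]:
    shown = displayed_fps(rendered, refresh)
    print(f"{rendered} FPS rendered on a {refresh}Hz display -> about {shown} shown")
```

In this simplified model, 200 FPS on a 60Hz display still shows 60 frames per second, while 100 FPS on a 144Hz display is limited by the hardware rather than the monitor.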

The image below clearly illustrates this: at 60Hz, a moving object in the game world can come across as choppy, almost as if it’s ‘teleporting’ from one position to another. This can greatly reduce your ability to track fast-moving players or objects in the game. At higher framerates, these fast-moving objects are shown much more smoothly because the display updates their position more often.
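To put a number on that ‘teleporting’ effect, here is a small sketch that estimates how far an object jumps between two consecutive screen updates. The speed used (an object crossing a 1920-pixel-wide screen in half a second, i.e. 3840 pixels per second) is an assumed example value, and `pixels_per_refresh` is a name chosen for this illustration.

```python
def pixels_per_refresh(speed_px_per_s: float, refresh_hz: float) -> float:
    """Distance an object moves on screen between two consecutive refreshes."""
    return speed_px_per_s / refresh_hz


# Assumed example: an object crossing a 1920 px wide screen in 0.5 seconds.
speed = 1920 / 0.5  # 3840 pixels per second

for hz in (60, 144, 240):
    jump = pixels_per_refresh(speed, hz)
    print(f"{hz}Hz: object jumps about {jump:.1f} px between updates")
```

Under these assumptions the object jumps 64 pixels per update at 60Hz but only 16 pixels at 240Hz, which is why its movement looks far less choppy.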

Modern gaming monitors go up to 360Hz and beyond, providing exceptional clarity and fluidity. Some people claim (jokingly so, in most cases) that the human eye cannot see over 30 frames per second, but these claims are false and originate from an internet meme. Gaming on a higher refresh rate monitor won’t necessarily make you a better player, but the effect is instantly noticeable, though with diminishing returns. Going from 60Hz to 144Hz will be a world of difference and might even make it uncomfortable to play a fast-paced game on a regular 60Hz monitor afterwards, whilst going from 144Hz to 240Hz will be a less eye-opening experience (though the difference is still noticeable for most people).
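One way to see the diminishing returns is to look at how long each frame stays on screen. The sketch below is simple arithmetic (1000 milliseconds divided by the refresh rate), not a measurement of perceived smoothness; `frame_time_ms` is a name chosen for this example.

```python
def frame_time_ms(refresh_hz: float) -> float:
    """Time between two consecutive refreshes, in milliseconds."""
    return 1000.0 / refresh_hz


steps = [(60, 144), (144, 240), (240, 360)]
for old_hz, new_hz in steps:
    saved = frame_time_ms(old_hz) - frame_time_ms(new_hz)
    print(
        f"{old_hz}Hz -> {new_hz}Hz: "
        f"{frame_time_ms(old_hz):.2f} ms -> {frame_time_ms(new_hz):.2f} ms "
        f"(about {saved:.2f} ms less per frame)"
    )
```

Going from 60Hz to 144Hz shortens each frame by roughly 9.7 ms, whereas going from 144Hz to 240Hz only shaves off about 2.8 ms, which fits the observation that each further step up is less dramatic.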

I have a 144Hz laptop screen. The configuration is an AMD Ryzen 7 6800H and an RTX 3050. It produces an average of 200+ frames in competitive and 300+ in deathmatch. Players sometimes disappear and reappear on screen. Should I cap the framerate? If so, at what limit?

Disappearing enemies normally don’t have anything to do with framerates; I would make sure your GPU drivers are up to date and that you’re not running the game at too high settings as a starting point.

If your monitor only runs at 60Hz and the game says you’re running 100, are you still running at 60 and it just says 100, or can it actually run 100?

Some monitors can be overclocked, but you can’t make a 60Hz monitor display 240 frames per second, for example. Your game might be generating that many frames (that is what the in-game framerate counter shows: how many frames the game is pushing) but your monitor won’t be able to display all of them.

Will an RTX 2070 provide decent Fortnite performance?

Yes, that should definitely provide you with enough frames (if the rest of your build is up to par of course) to play the game properly.

Do I need a 240Hz monitor to run 240 frames?

You need a 240Hz monitor to ‘see’ 240 frames, yes. Producing 240 frames (or more, or less) is done by the PC.

Hello,

Concerning the configuration in Warzone.

I have an RTX 2080 Ti graphics card, but my screen monitor only refreshes at 75 Hz.

Should I limit the framerate in the game to 75, or can I set it to unlimited, or something else?

Thank you for your help.

We usually recommend leaving the framerate uncapped, though if you feel like there are too many fluctuations or it influences the fluidity of your game you can always cap it at 75.